AI Is the New Security Attack Surface

Artificial intelligence (AI) is transforming enterprise technology at unprecedented speed. As organizations embed AI into operational workflows and decision-making systems, security teams have focused on adversaries using AI to enhance attacks in every phase of the kill chain: from automated reconnaissance and vulnerability discovery to large-scale social engineering and fraud.

Research from organizations such as Microsoft, Google, and Anthropic confirms this shift is already well underway. Threat actors are actively experimenting with large language models (LLMs) to support malicious operations, including reconnaissance, vulnerability research, and influence activities. Adversaries leverage AI in increasingly sophisticated espionage operations targeting many organizations. Additionally, they are automating and scaling intrusion workflows, such as initial access and lateral movement, using AI-driven tooling.

But adversaries are also increasingly targeting AI technologies themselves. AI models, training pipelines, inference systems, and supporting infrastructure represent a rapidly expanding attack surface. As AI becomes embedded in business-critical functions, attacks against AI systems introduce new forms of operational and security risk that traditional security monitoring was not designed to detect.

Common Attack Techniques Targeting AI Systems

Threats such as prompt injection, model poisoning, data extraction, and model manipulation are no longer theoretical risks; they are actively researched and increasingly tested in production environments. Furthermore, AI systems introduce new technical dependencies and trust relationships that adversaries can exploit.

Organizations, including NIST, OWASP, and the MITRE Corporation through their ATLAS framework, have begun defining the scope of adversary attacks on AI and standardizing how we refer to these events. Such initiatives provide important structure, but the threat landscape is evolving faster than traditional reporting mechanisms can track.

Unlike more general information security and cybersecurity reporting, sources of information covering AI-focused attacks, and especially trends and similar in this ecosystem, are in some cases scarce and more generally dispersed across company specific blogs, occasional sector-specific media reporting, academic research papers, and social media posts. The result is a difficult arena for organizational decision makers and defenders to monitor for both the most critical, emerging attack vectors as well as general situational awareness within the space.

Within this space, several general lines of attack have emerged:

Prompt Injection and Model Manipulation

Prompt injection attacks manipulate model inputs to bypass safeguards or extract restricted information. These techniques can expose sensitive data, alter model behavior, or undermine trust in AI-driven systems. As generative AI becomes integrated into enterprise applications, prompt-based attacks represent one of the fastest-growing areas of AI security research.

Model Poisoning and Training Data Risks

Model poisoning introduces malicious data into training pipelines to influence model outputs or create hidden behaviors. Research has demonstrated that relatively small volumes of manipulated data can introduce persistent model behavior that may be difficult to detect through conventional testing.

AI Distillation Attacks

An emerging field for AI models essentially extracting information from other, more complete AI models, distillation attacks focus on replicating models as a form of industrial espionage. Primarily a threat for AI model developers, such attacks have the potential to enable significant, illicit transfer of valuable intellectual property.

AI Infrastructure and Data Exposure

Enterprise AI systems rely on complex infrastructure including APIs, storage environments, model repositories, and training pipelines. Misconfigurations or vulnerabilities within these components can expose models and training data to unauthorized access, creating new pathways for compromise.

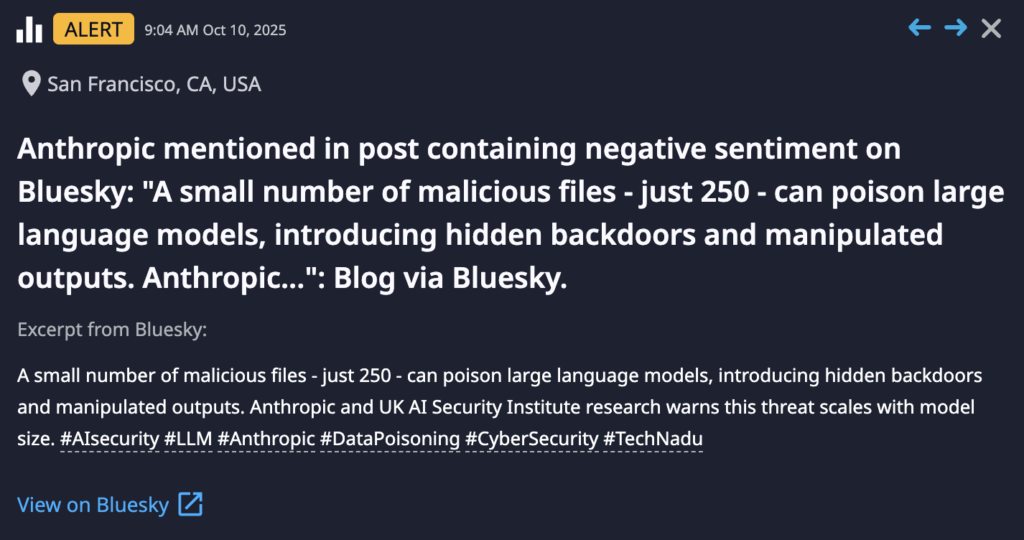

The above provides an example of content of interest for AI security purposes. This Dataminr alert, based off joint research led by Anthropic, highlights potential model poisoning through relatively small amounts of data. While not news of an in-the-wild adversary attack, the reporting highlights risks and potential attack scenarios on AI applications. Maintaining awareness of these developments is critical to evaluate security posture, assess risk and attack surface, and apply controls and defenses where possible.

Defending AI Requires Using AI to Your Advantage

As organizations rapidly embed AI into core applications, infrastructure, and decision-making processes, the threat landscape is evolving just as quickly. Without early and continuous visibility of AI ecosystem threats, organizations remain vulnerable and unaware of new malicious activity that could quickly spread across their environment.

Dataminr delivers a modern approach with new detection models purposefully designed to identify AI ecosystem threats across more than one million information sources. Dataminr delivers groundbreaking new AI threat detection capabilities through:

- Continuous monitoring of emerging threats across the AI ecosystem. Dataminr’s AI-native detection models analyze global digital signals to identify earliest-stage indicators of AI risk. Emerging attack techniques often surface in fragmented technical sources long before formal reporting, while proprietary vendor ecosystems limit independent visibility.

- Real-time correlation of threat signals to AI exposure and risk. Dataminr connects emerging external threat signals with relevant technologies and attack patterns, helping organizations identify where AI-related risks may affect their environment. Automated enrichment reduces manual research and accelerates investigation.

- Early detection and contextualization of new AI attack methods. Dataminr surfaces adversary experimentation and technical developments at their earliest stages, often before formal guidance exists. Agentic AI automatically assembles technical context so teams can quickly understand evolving risks.

- Decision-ready intelligence on the impact of AI threats. Dataminr transforms early indicators into actionable intelligence by enriching signals with technical relevance and operational context. Security teams can determine faster which emerging AI threats matter and what actions to prioritize.

- Scalable visibility across fragmented AI threat activity. Dataminr continuously monitors diverse digital sources spanning research communities, security reporting, and technical discussions. This always-on visibility eliminates coverage gaps and reduces the operational burden of manual monitoring.

To answer the critical need for insight into AI-focused attacks and threats within modern organizations, Dataminr has introduced AI-specific threat reporting. Leveraging existing technology in combination with expanded sourcing and AI threat-specific identifiers, Dataminr’s real-time alerting and reporting platform now gives decision makers the critical awareness and tracking necessary to identify emerging threats to critical AI technology, applications, and infrastructure. Emphasizing speed of delivery along with industry-leading agentic AI enrichment for context, Dataminr’s AI-focused threat identification enables the safe and secure deployment of emerging technologies in enterprise environments.

Dataminr for Cyber Defense

Transform intelligence into a preemptive cyber advantage from first signal to risk-prioritized action.

Learn More